Macro for Calculating Bootstrapped Confidence Intervals About a Kappa Coefficient | Semantic Scholar

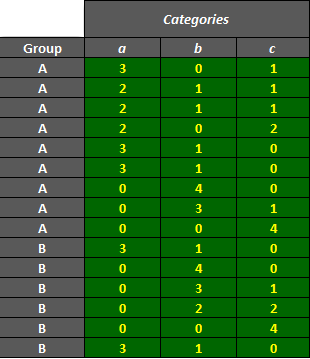

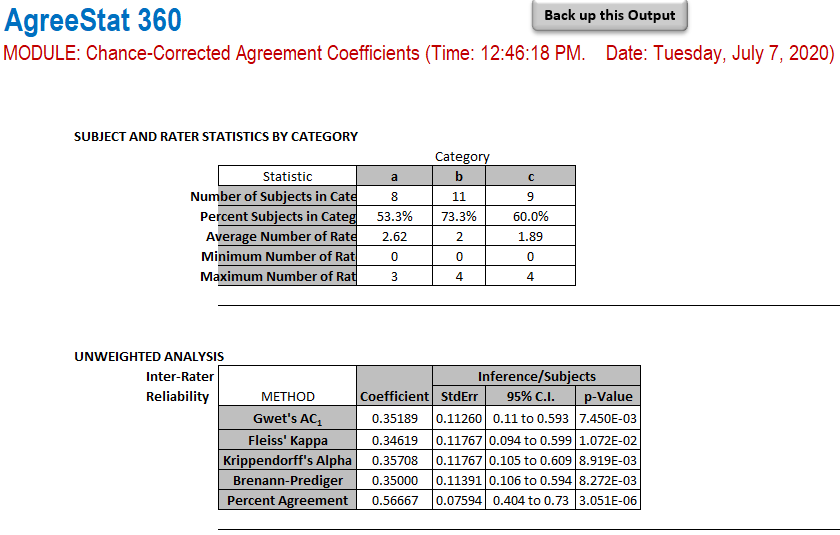

AgreeStat/360: computing agreement coefficients (Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) by sub-group with ratings in the form of a distribution of raters by subject and category

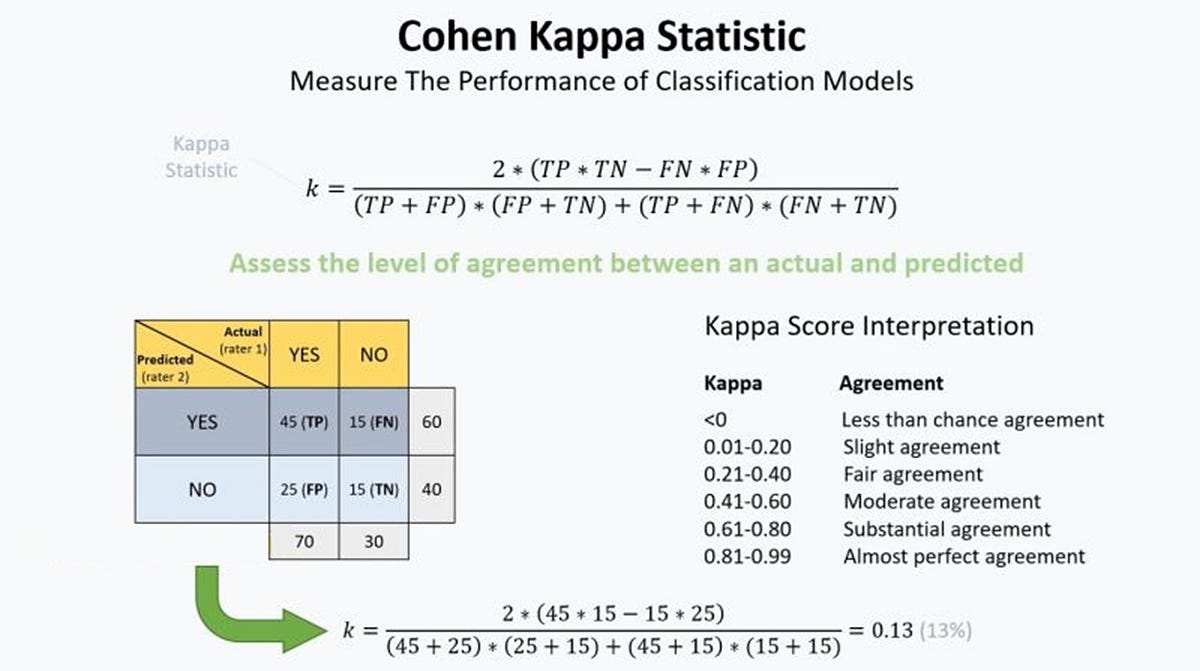

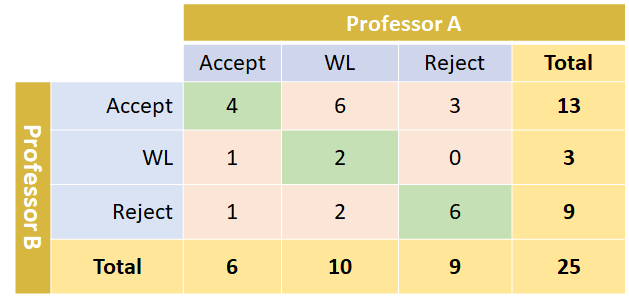

Multi-Class Metrics Made Simple, Part III: the Kappa Score (aka Cohen's Kappa Coefficient) | by Boaz Shmueli | Towards Data Science

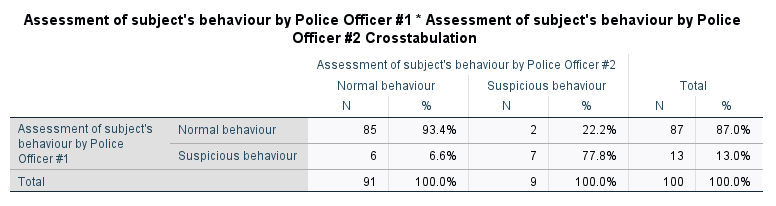

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of the output using a relevant example | Laerd Statistics

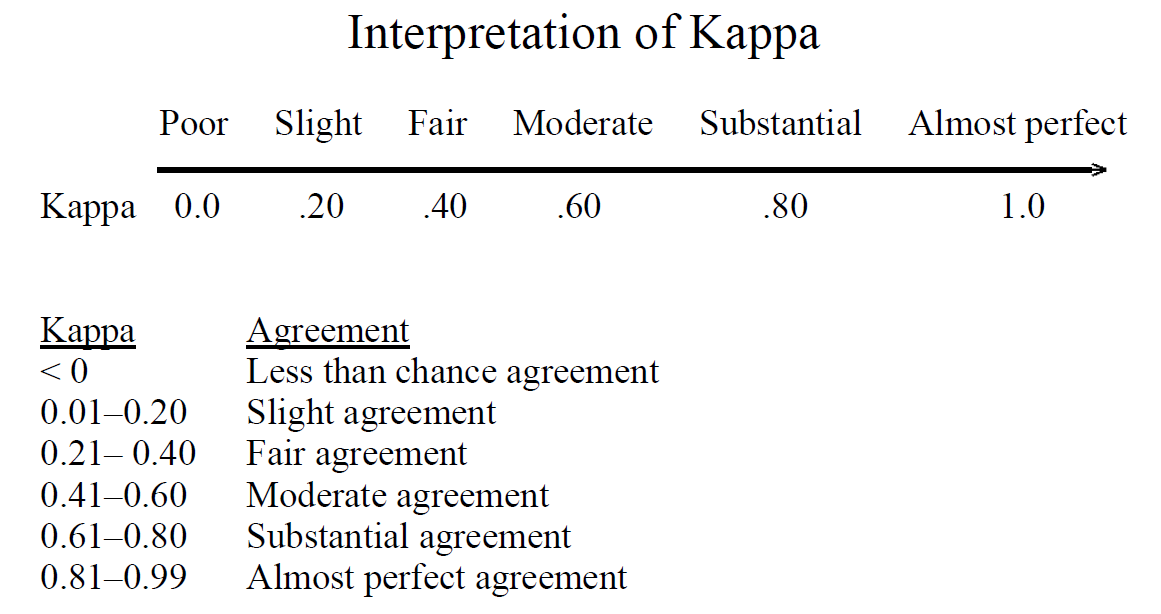

Using appropriate Kappa statistic in evaluating inter-rater reliability. Short communication on “Groundwater vulnerability and contamination risk mapping of semi-arid Totko river basin, India using GIS-based DRASTIC model and AHP techniques ...

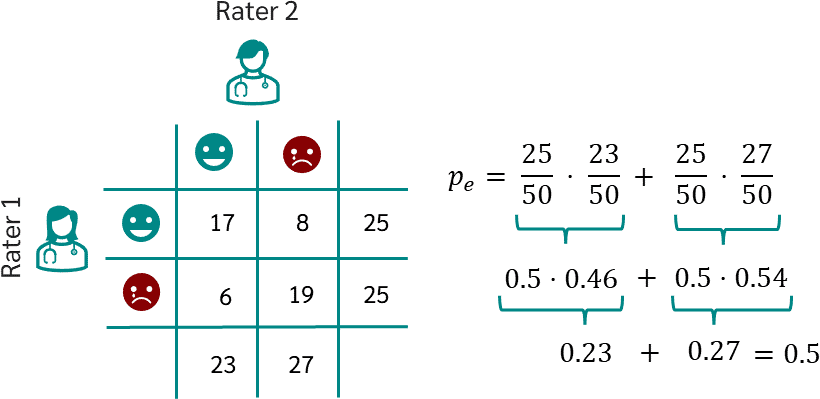

Cohen's Kappa: What it is, when to use it, and how to avoid its pitfalls | by Rosaria Silipo | Towards Data Science

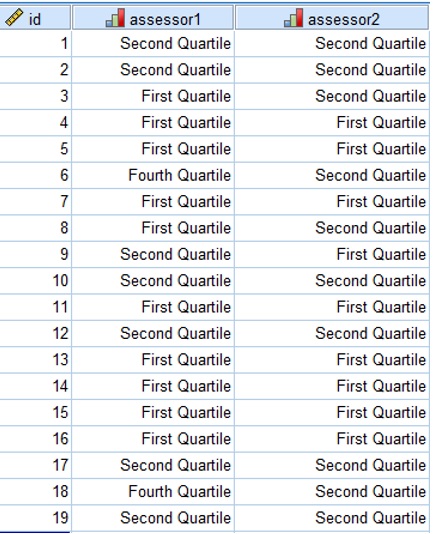

Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… | by Louis de Bruijn | Towards Data Science

The overall Fleiss Kappa agreement on individual stage categories in... | Download Scientific Diagram

AgreeStat/360: computing agreement coefficients (Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) with ratings in the form of a distribution of raters by subject and category